Perlucidus Software Project Survey

Jun 05, 2016

Progress to Date

Since the last time I wrote about this software project transparency survey, a lot has been accomplished:

- I’ve refined the survey in several ways, and added new data that is used to define how the server handles questions, when they are skipped, and what the valid answers are.

- I’ve created a dynamic survey engine on an AWS server based on templates created by RapidWeaver. The survey tool supports resuming at a later time so someone doesn’t have to finish the survey all in one sitting. Yet, it maintains anonymity. :-)

- I’ve created an initial web-based console that allows me to see how many surveys have been submitted, how many are in progress and how far they have gotten on average. It is the platform on which I’ll generate reports to show correlations or the lack thereof.

- I’ve implemented a review mode so I can invite people to review the questions. The console tool allows me to see the comments made. This won’t be part of the final survey that people will fill out. It is there to allow collaboration with others before the survey is put into use with non-reviewer participants.

- I’ve chosen a name for this project: Perlucidus Software Project Survey. In fact, I already owned the domain name perlucidus.info so I’ve been using that as the home domain for this project.

I like the idea of information itself being like a perlucidus cloud:

Perlucidus clouds refer to cloud formations appearing in extensive patches or layers with distinct small gaps in between the cloud elements to allow higher clouds, blue sky, sun or moon to be seen through the gaps.

- In a location where everyone can see it

- It doesn’t eclipse other things but coexists with them in a way where more can be added and all can still be seen

- Is lightweight and doesn’t dominate the reality on the ground

And so, on my perlucidus.info AWS server, I created the beginnings of the Perlucidus Software Project Survey. Here is that web site, configured for review:

What’s next is a review of the questions themselves. I will reach out to some of my software engineer friends to help review this survey and offer feedback for improvement. Anyone is welcome to offer comments. The survey is completely anonymous, so if you want me to know who you are, you will have to put your name in one of the review comments. Otherwise, I’ll treat your comments as anonymous. The only downside to anonymous comments is that if I have a follow-up question about them, I won’t know whom to ask.

Research on Transparency in Software Projects

Throughout my development of the software and the survey data, Dr. Jennifer Rice has been thoughtfully helping me to understand how to collect useful data. Collecting data won’t be nearly as difficult as collecting useful data. The difference has everything to do with whether participants can understand a question well enough to answer it, or whether the question is flawed in some way so that the user ends up answering a question that wasn’t actually being asked. Dr. Rice provided graduate-level books and advice and example questions and encouragement and criticism and patience.

Dr. Rice has also been researching published papers on related topics. One goal of that research was to find out if it was even necessary for us to proceed. If the data already exists, there may be no need. But her search revealed nothing that would suggest our survey is redundant.

Another goal of that research was to determine how other researchers have approached similar problems. She found over a dozen papers on various topics that had useful examples or methodologies or analyses. She pointed out how extensive the methodology sections of some of these papers were and suggested that for this kind of research there didn’t seem to be established standard practices (aside from practices that apply to all kinds of survey-based research).

There are other transparency-related academic papers; however, their use of the term “Transparency” has more to do with organizational style than information sharing practices as far as I can tell. Here are some examples:

- Multi-method analysis of transparency in social media practices: Survey, interviews and content analysis. (Marcia W. DiStaso, Denise Sevick Bortree, 2012)

- Organizational Transparency and Sense-Making: The Case of Northern Rock (Oana Brindusa Albu & Stefan Wehmeier, 2014)

- Organizational transparency drives company performance (Erik Berggren and Rob Bernshteyn, 2007)

- Radical transparency: Open access as a key concept in wiki pedagogy

The purpose of this article is to argue that we need to extend our understanding of transparency as a pedagogical concept if we want to use these open, global wiki communities in an educational setting.

- Radical Transparency? (Clare Birchall, 2014)

This article considers the cultural positioning of transparency as a superior form of disclosure through a comparative analysis with other forms.

Details on using RapidWeaver with Perl CGI

The survey generation based on spreadsheet data is accomplished like this:

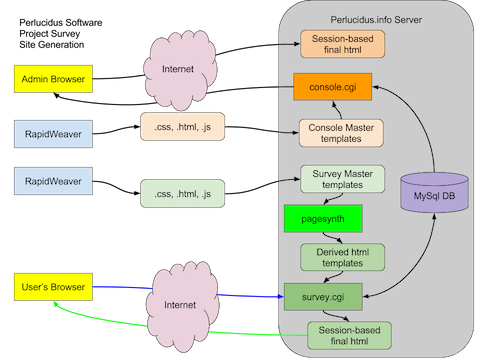

RapidWeaver produces .html, .css and .js files, which I copy to the server. A tool called pagesynth (bright green box) converts the pages by processing macros embedded in the templates; RapidWeaver itself ignores these macros. The macros add navigation, context about each question, review fields and more. The parts of the page that a dynamic web site would normally generate on every request are instead produced by pagesynth, once, when the survey is initialized on the server. After that, the database and CGI are not involved in building those aspects of the page, which takes a big load off the server and improves performance for the user.
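To make the one-time expansion step concrete, here is a minimal sketch of the idea. It is written in Python purely for illustration and is not the actual pagesynth code. The `[[NAME]]` macro syntax, the `synthesize_page` function and the sample values are all assumptions made up for this example; the post does not describe the real macro format.

```python
import re

# Hypothetical build-time macro syntax: [[NAME]].
# The real pagesynth syntax is not described in this post.
BUILD_MACRO = re.compile(r"\[\[(\w+)\]\]")


def synthesize_page(template: str, values: dict) -> str:
    """Expand build-time macros once, when the survey is initialized.

    Macros with no known value are left untouched, and so is any text
    that does not match the build-time syntax (for example, markers
    meant to be filled in later, per request).
    """
    def replace(match):
        name = match.group(1)
        return values.get(name, match.group(0))

    return BUILD_MACRO.sub(replace, template)


# A stand-in for a page exported by the site builder.
template = (
    "<nav>[[NAV]]</nav>"
    "<p>[[QUESTION_TEXT]]</p>"
    "<input type='hidden' name='session' value='{{SESSION}}'>"
)

derived = synthesize_page(
    template,
    {"NAV": "Question 3 of 40", "QUESTION_TEXT": "Sample question text"},
)
```

Because the result is stored as a static file, the per-question lookups happen once per survey setup instead of once per page view. The `{{SESSION}}` marker passes through unchanged, which is what lets a later stage fill it in.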

The output of pagesynth is a set of “Derived html templates”, which are read in by survey.cgi. That script expands a second set of macros that neither RapidWeaver nor pagesynth recognizes. Those macros can only be expanded at runtime because they include the user’s session number. For each request, survey.cgi reads in the template, resolves those last-minute values and includes them in the web page seen by the participant. It also records the answer if the participant submitted one, handles resuming the survey, and sends a link via email so a participant can resume.
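The runtime stage can be sketched the same way. Again, this is Python for illustration only, not the real survey.cgi. The `{{NAME}}` syntax, the function names, the `session` query parameter and the example URL are hypothetical, and using a random token as the session identifier is my assumption for the sketch, not something the post specifies.

```python
import html
import re
import secrets
from urllib.parse import urlencode

# Hypothetical runtime macro syntax: {{NAME}}.
RUNTIME_MACRO = re.compile(r"\{\{(\w+)\}\}")


def new_session_id() -> str:
    """Return a random identifier that carries no personal data (assumed scheme)."""
    return secrets.token_urlsafe(16)


def render_page(derived_template: str, runtime_values: dict) -> str:
    """Fill in the per-request values of a derived template."""
    def replace(match):
        # A missing value raises KeyError instead of sending a raw macro
        # to the participant.
        return html.escape(runtime_values[match.group(1)], quote=True)

    return RUNTIME_MACRO.sub(replace, derived_template)


def resume_link(base_url: str, session_id: str) -> str:
    """Build a link that could be emailed so a participant can resume."""
    return base_url + "?" + urlencode({"session": session_id})


session_id = new_session_id()
page = render_page(
    "<input type='hidden' name='session' value='{{SESSION}}'>",
    {"SESSION": session_id},
)
link = resume_link("https://example.invalid/cgi-bin/survey.cgi", session_id)
```

Keeping this stage to a simple substitution over a prebuilt template means the per-request work stays small, which matches the performance goal described above.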